How Much Compute Does China Have?

a deep dive

How much compute does China have? Despite its all-important relevance to American export controls, the AI race between the U.S. and China, and national security, this question remains unanswered.

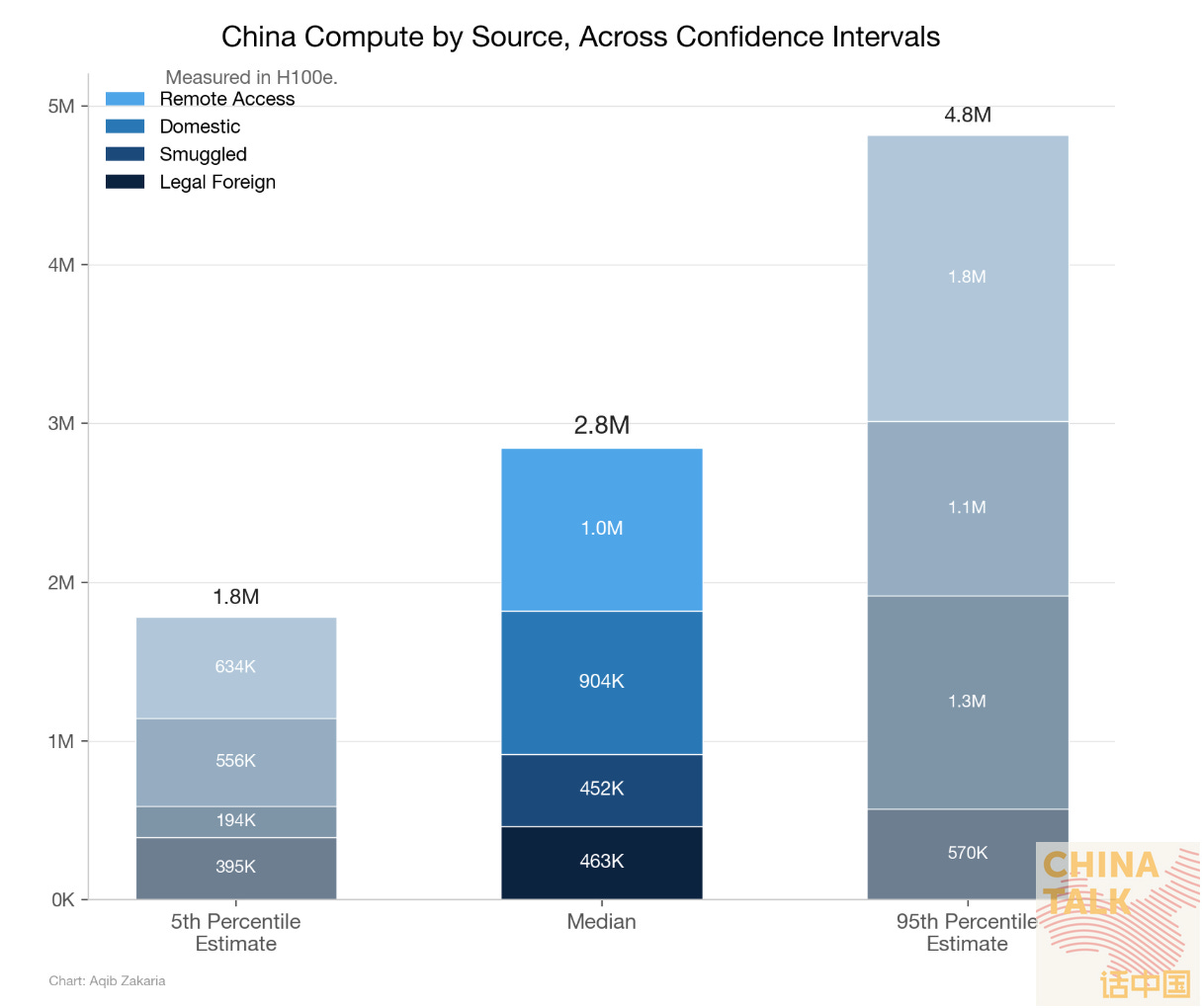

Today and tomorrow, ChinaTalk will attempt to answer the question using two very different methods. Today’s article estimates China’s compute via a bottom-up (supply-side) approach, trying to brute-force count every chip procured through every possible means. Tomorrow’s article by Nick Corvino takes a demand-side approach, deducing the amount of compute China has from the needs of training and serving the country’s models. Together, the two articles will hopefully provide a solid range of estimates to inform not only policymakers but also future researchers trying to understand China’s compute supply.

The work for the two articles was conducted independently, and only after completion did we compare notes. Surprisingly, despite large uncertainties on both sides, we arrived at nearly the same number! Both estimates were roughly 2.8 million H100e, and the convergence of estimates suggests we may be on the right track.

A disappointing disclaimer: the answer cannot be known within any tight range. Although both pieces arrive at a number, one that we are confident is correct to within an order of magnitude, we would be shocked if it were accurate far beyond that. The two biggest sources of variability on the supply side are a poor understanding of how much compute China accesses remotely and the inherent opacity of chip smuggling operations.

Ultimately, this analysis should not have required this much guesswork. Without a concrete answer, the success of our export control regime and national security framework, as well as our perceptions of our advantages against China, can only be based on hunches. A credible number is needed to understand how well export controls are working, to what extent we are ahead of China, and to track China’s behavior.

Such high variability in this estimate should inspire the U.S. government to adopt mechanisms that enable us to monitor our adversary. These mechanisms include the ability to peer into the operations of hyperscalers, neoclouds, and all forms of cloud-service providers (CSPs). Even if the goal is not to obstruct their operations, the U.S. government cannot know how China is using and abusing compute without this information. A Know Your Customer scheme or something similar is required to enforce the policies we have already implemented and to learn how they are being circumvented. We hope that the U.S. secretly maintains a credible estimate via the aforementioned methods or other channels; however, in case it does not, this work will serve as an important tool for policymakers and China Hands alike.

To maintain standardization across chip generations, this piece quantifies compute in “H100-equivalents,” or H100e. A chip’s H100e value is calculated by dividing its peak operations per second at FP8/INT8 by the H100’s corresponding specification. This is the method used by Epoch AI. Note that raw FLOPS do not tell the whole story of a chip; memory bandwidth, memory capacity, and software are critical to a chip’s performance and usability. Until we have something better, though, FLOPS are what we have and what we will use.
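A minimal Python sketch of this conversion follows. The peak-throughput figures are our assumption, taken from our reading of vendor datasheets as dense (non-sparse) FP8/INT8 peaks, with the H100 SXM at 1,979 TFLOPS; Epoch AI’s exact inputs may differ slightly. The unit counts are the estimates quoted later in this piece.

```python
# Sketch of the H100-equivalent (H100e) conversion: units x (chip peak / H100 peak).
# ASSUMPTION: dense (non-sparse) peak FP8/INT8 figures from vendor datasheets;
# Epoch AI's exact inputs may differ slightly.
H100_FP8_DENSE_TFLOPS = 1979.0  # H100 SXM, FP8, dense

# Illustrative lookup table of dense low-precision peaks (TOPS or TFLOPS).
PEAK_LOW_PRECISION = {
    "A100": 624.0,   # INT8, dense
    "H800": 1979.0,  # same compute as the H100; only interconnect was cut
    "H20": 296.0,    # FP8, dense
}


def h100e_per_chip(chip: str) -> float:
    """Ratio of a chip's peak FP8/INT8 throughput to the H100's."""
    return PEAK_LOW_PRECISION[chip] / H100_FP8_DENSE_TFLOPS


def to_h100e(chip: str, units: int) -> float:
    """Convert a unit count into H100-equivalents."""
    return units * h100e_per_chip(chip)


if __name__ == "__main__":
    # Unit counts are the estimates quoted in this article.
    for chip, units in [("A100", 197_789), ("H800", 116_423), ("H20", 1_495_352)]:
        print(f"{chip}: {units:,} units -> {to_h100e(chip, units):,.0f} H100e")
```

With these inputs the results land within a few units of the A100, H800, and H20 figures quoted in the legal-purchases section; small gaps presumably come from rounding in the spec values.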

Bottom-Up (Supply-Side) Calculation

From a supply-side perspective, China’s compute can be understood as the number of chips within China plus the number of chips China can remotely access abroad. The former category can be further broken down into chips China has legally purchased from abroad, chips China has illegally purchased from abroad (smuggled chips), and chips China has produced domestically.
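In symbols (our own shorthand, not notation from any source, with every term measured in H100e), the decomposition reads:

```latex
C_{\text{China}}
  = \underbrace{C_{\text{legal}} + C_{\text{smuggled}} + C_{\text{domestic}}}_{\text{chips within China}}
  + C_{\text{remote}}
```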

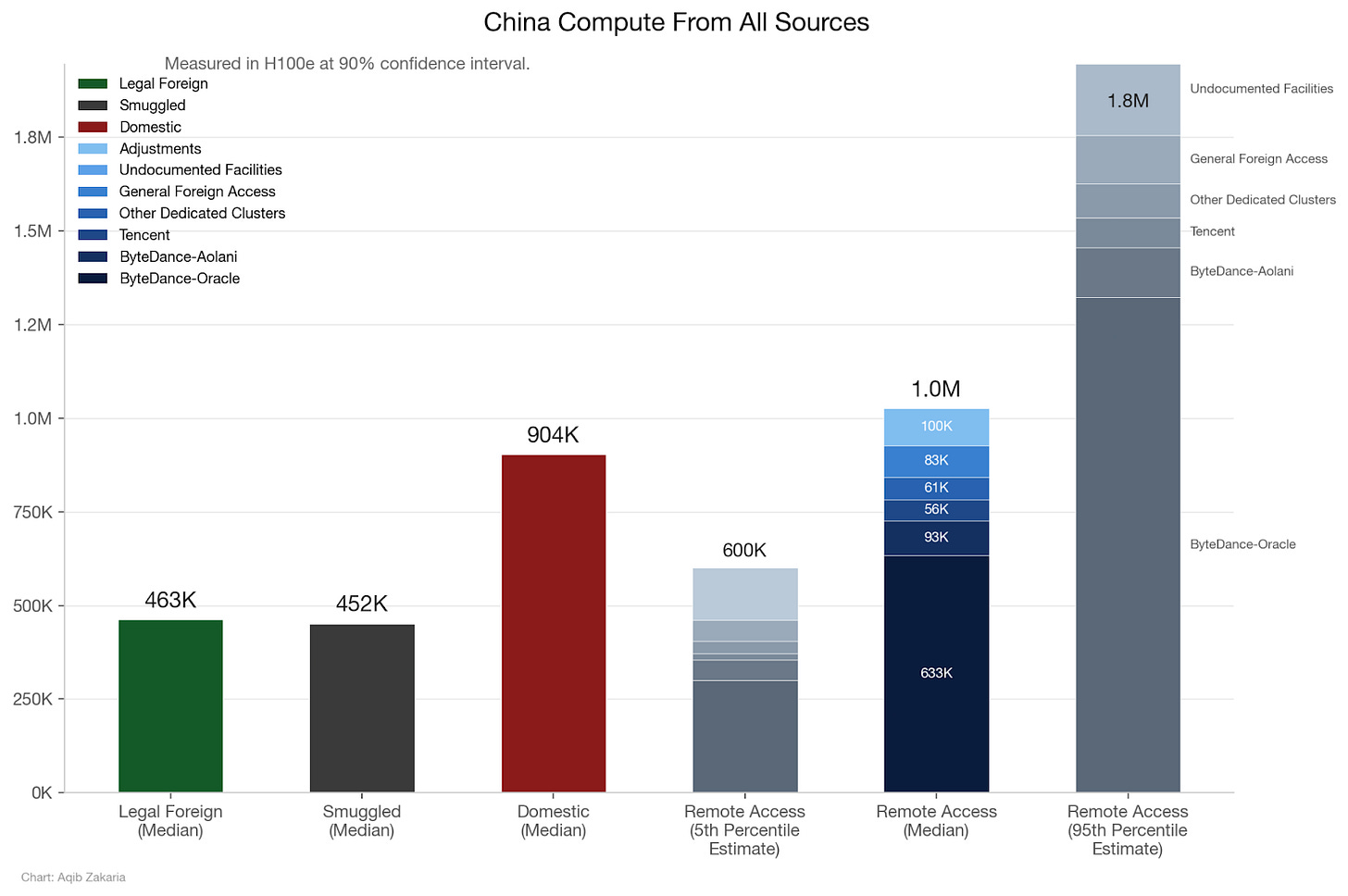

Using this method, this piece estimates China’s compute supply at about 2.8 million H100e, with a 90% confidence interval of 1.8 million to 4.8 million H100e. The bulk comes from domestically produced chips and compute remotely accessed via the cloud, but legal purchases from foreign vendors and smuggled chips both play a non-negligible role.

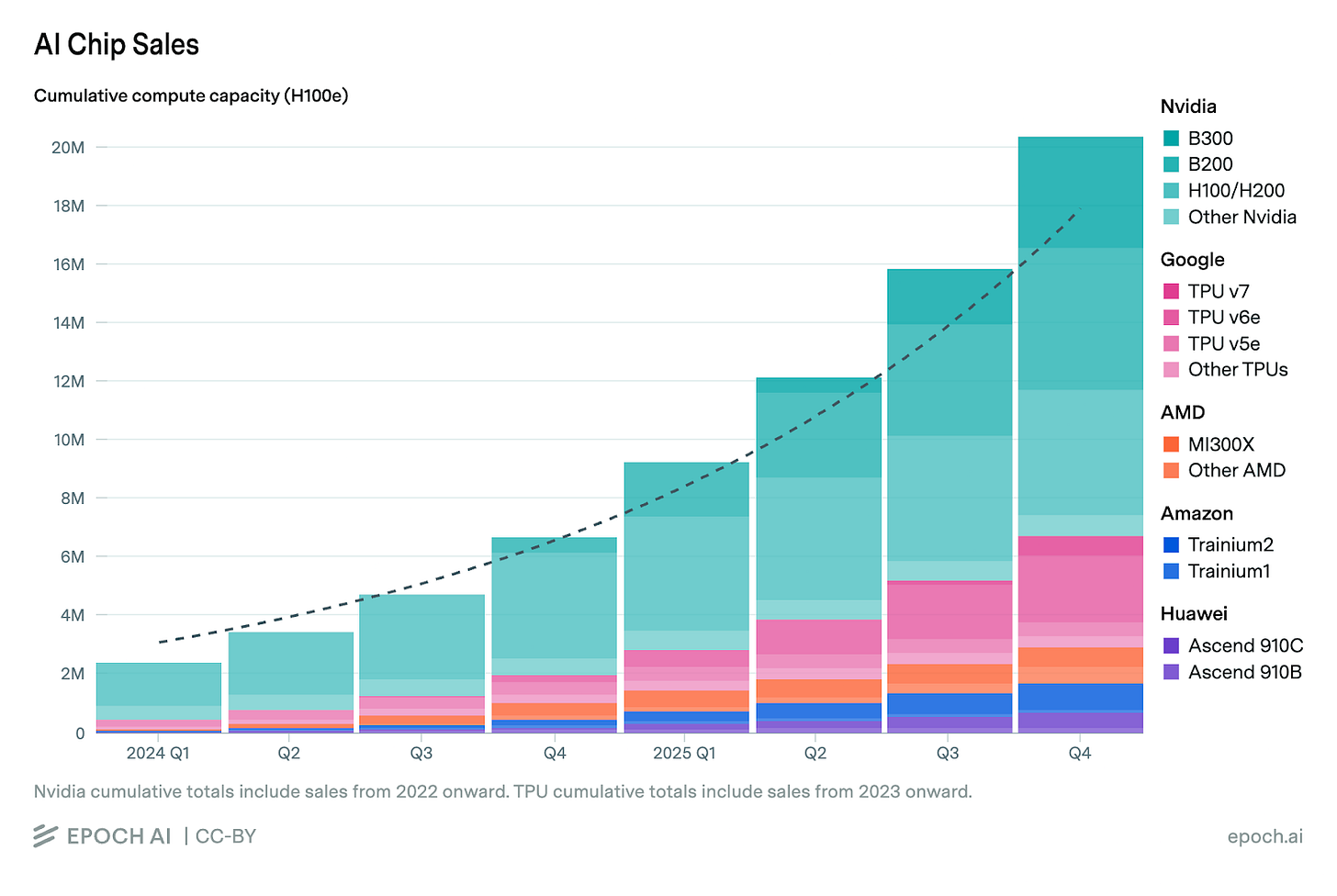

For context, Epoch AI estimates the cumulative compute by leading chip designers to total 20 million H100e. This suggests that China has access to roughly 14% of the world’s compute, or somewhat more than an eighth.

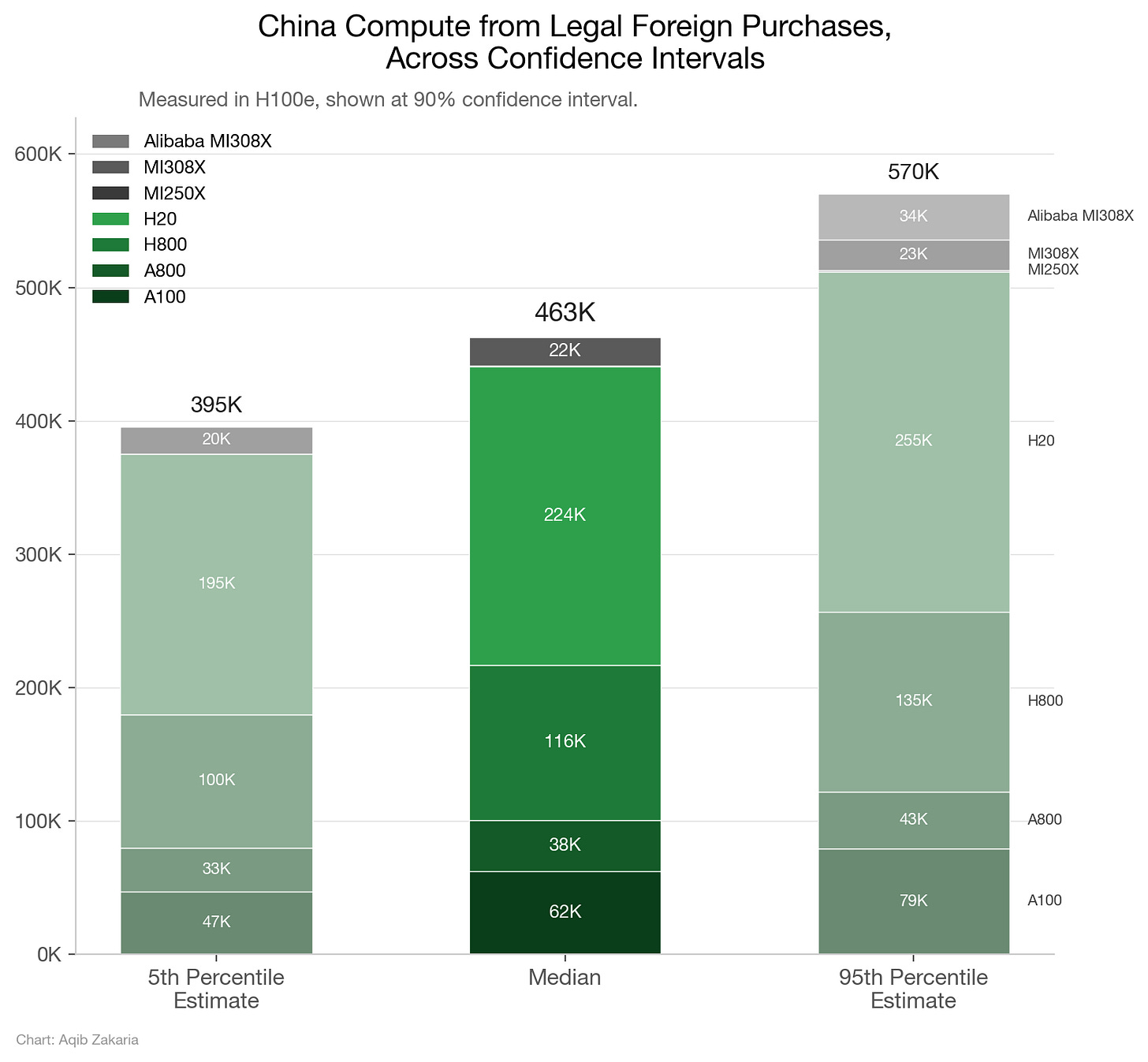

Legal Foreign Compute

Starting with legally purchased foreign chips, China has placed its largest orders with Nvidia and, to a lesser degree, AMD. Other specialized chips, like TPUs and AWS Trainiums, have typically been tied to specific hyperscalers or platforms and are thus absent from Chinese soil. Intel’s Gaudi line, while not tied to a single platform, was a commercial failure with no confirmed Chinese buyers.

Before BIS’s October 2022 export controls, Nvidia’s A100, released in 2020, was legal to purchase. During that two-year period, China likely purchased around 197,789 A100s (62,363 H100e). Similarly, China likely purchased roughly 3,000 MI250Xs (582 H100e), AMD’s equivalent of the A100. These numbers have the loosest confidence intervals: A100s and MI250Xs were sold on the global market, and estimating the share that went to China is an imprecise science. Nvidia’s legal sales after the A100, by contrast, were of China-specific designs, giving us greater confidence. The numbers given reflect Epoch AI’s data, which come with 90% confidence intervals.

The October 2022 export controls restricted the sales of A100s and other AI chips based on the criteria of network bandwidth and arithmetic performance. To circumvent these restrictions, Nvidia produced the A800 and H800 for the Chinese market. These chips, roughly equivalent to the A100 and the H100 in arithmetic performance, were made with downgraded network bandwidth so as not to be restricted. BIS revised its export controls to restrict the A800 and H800 in October 2023, but during this one-year period, Chinese customers were able to procure roughly 121,077 A800s (38,175 H100e) and 116,423 H800s (the same in H100e).

After October 2023, the thresholds on arithmetic performance were far stricter, so Nvidia developed the H20 for the Chinese market. The H20 had about 15% of the arithmetic power of its contemporary, the H200, but was designed with outsized memory bandwidth, making it well suited to AI inference. The H20 illustrates the shortcomings of the H100e methodology: despite its low performance in H100e terms (a unit better suited to measuring training strength), the H20 is arguably more powerful than the H100 for serving inference. Regardless, the H20 was sold until April 2025, by which time China had likely purchased 1,495,352 H20s (223,658 H100e). During the same period, AMD produced an equivalent of the H20, the Instinct MI308X, but sold it in much smaller quantities. To date, Epoch AI estimates that Chinese buyers have purchased 32,500 Instinct MI308Xs (21,523 H100e).

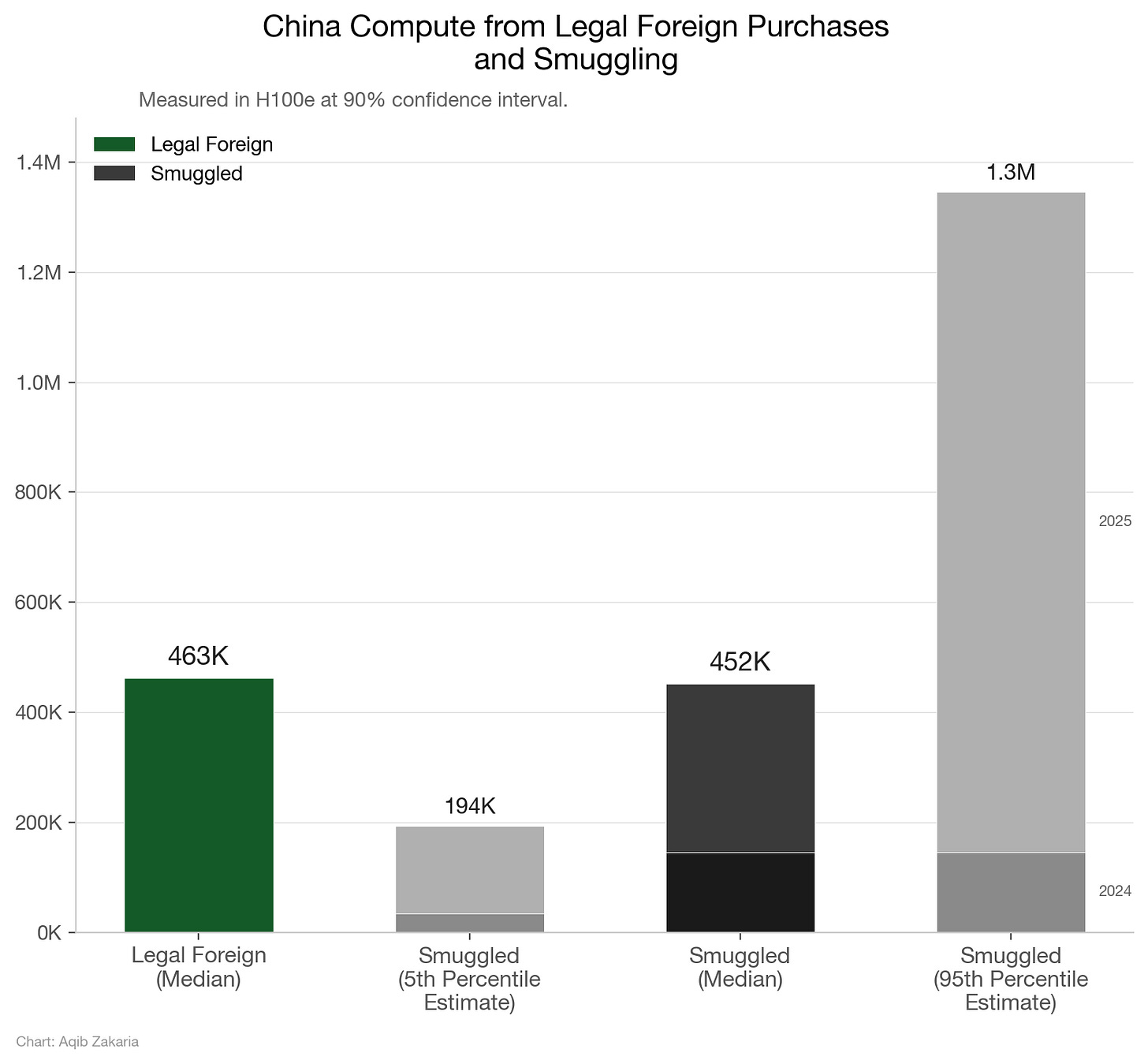

In all, legal purchases from foreign providers account for over 460,000 H100e, with a 90% confidence interval of 395,000 to 570,000 H100e. The range is predominantly due to uncertainty about A100 sales, but the full error bar analysis is here. These calculations do not include pending orders for Nvidia’s H200 and AMD’s MI325X, as these orders have yet to be confirmed and seem to be in regulatory limbo. They also exclude Alibaba’s pending order for the MI308X, though it is accounted for in the error bars. Processing such orders could drastically enlarge this total, depending on the quantities allowed to Chinese customers. Of all the categories we estimated, legally purchased compute from foreign companies is the one in which we have the most confidence. The companies selling compute to China are all public, and gleaning Chinese purchases from their filings is relatively easy compared to the calculations that follow.
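As a sanity check on the “over 460,000” figure, the medians quoted in this section can simply be summed. This Python sketch adds only the point estimates; it does not reproduce the error bar analysis, and it leaves out the pending H200, MI325X, and Alibaba MI308X orders just as the text does.

```python
# Sum of the median H100e figures for legal foreign purchases quoted above.
LEGAL_H100E = {
    "Nvidia A100": 62_363,
    "AMD MI250X": 582,
    "Nvidia A800": 38_175,
    "Nvidia H800": 116_423,
    "Nvidia H20": 223_658,
    "AMD MI308X": 21_523,
}

total = sum(LEGAL_H100E.values())
print(f"Legal foreign compute: {total:,} H100e")  # 462,724

# Each product's share of the total, largest first.
for name, value in sorted(LEGAL_H100E.items(), key=lambda kv: -kv[1]):
    print(f"  {name:<12} {value:>8,} ({value / total:.1%})")
```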

Smuggled Compute

Besides legal purchases of foreign chips, some amount of China’s compute comes from chips illegally smuggled into the country. These are usually high-power chips restricted by export controls, such as Nvidia’s H100, H200, and newer B200.

Until the end of 2023, China had legal access to powerful Nvidia chips. Even the A800 and H800, barely downgraded from their originals, were legal because of poor export control design. Thus, significant quantities of smuggled chips likely began accumulating only in 2024.

CNAS reports a median estimate of 140,000 chips smuggled into China in 2024, with a 90% confidence interval of about 17,500 to 780,000 chips. Because smugglers are incentivized to move the best chips, not legal ones or older generations that are not worth the effort, the 2024 chips are assumed to be predominantly H100s and H200s; the Blackwell series did not ship until the very end of 2024. Since the H100 and H200 share the same peak FP8 throughput, each counts as one H100e, so roughly 140,000 H100e were smuggled into China in 2024.

In 2025, the amount of compute smuggled into China was likely larger than in 2024, due to two factors: increased power of chips and greater need. The Blackwell series was being shipped in large quantities throughout 2025, and the B200 has approximately 2.5 times the performance of an H100, according to Epoch AI. The Financial Times reported that at least $1 billion worth of Nvidia chips were smuggled into China in one quarter of 2025, with the B200 being the most popular and available offering.

Also, after the H20 was banned in April 2025, Chinese firms had a greater incentive to smuggle chips. The H20 had provided an ample supply of inference compute, so its restriction forced Chinese customers to acquire compute elsewhere, some of it likely via smuggling.

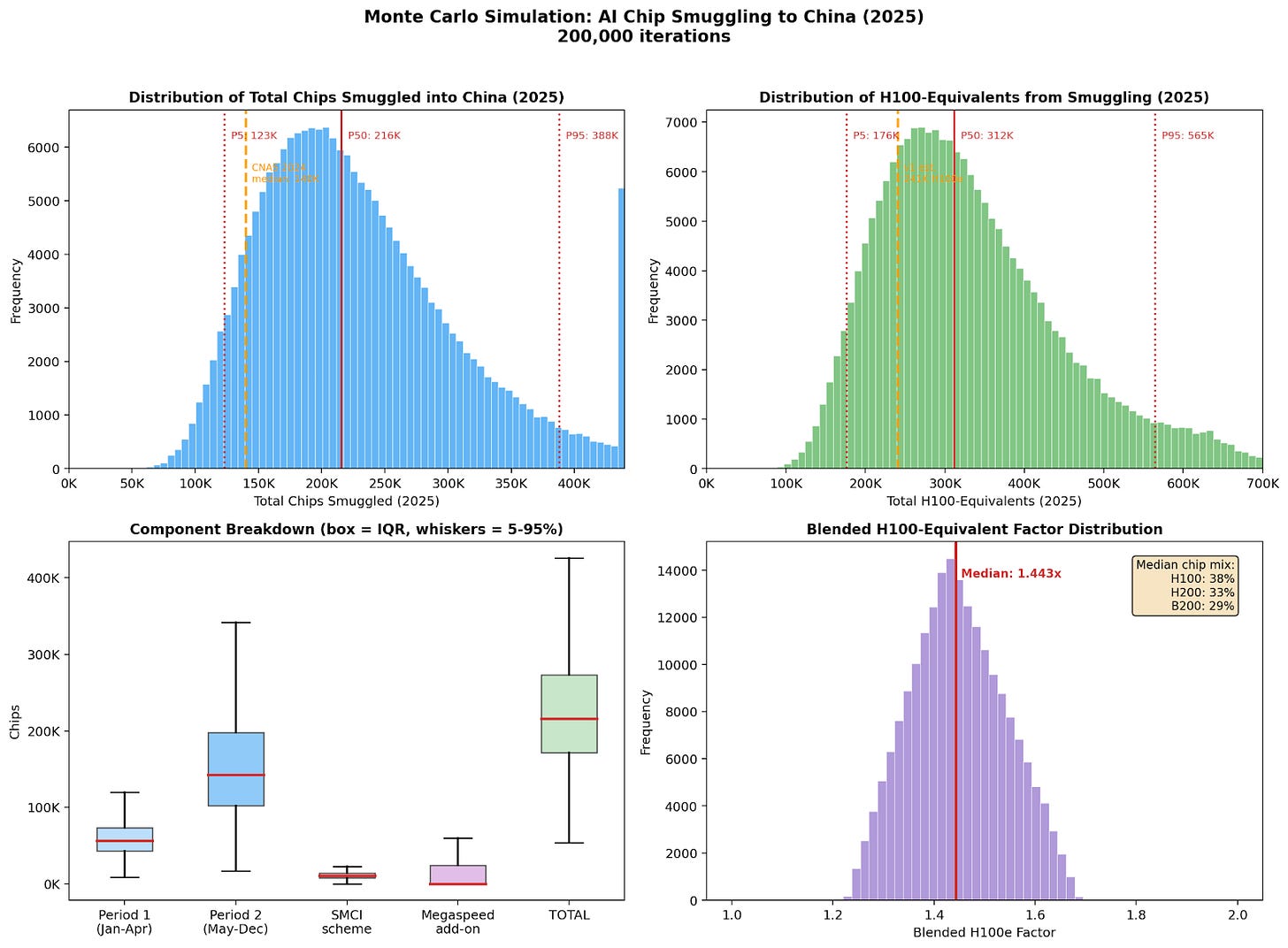

I ran a Monte Carlo simulation (repo here) similar to the one CNAS conducted for its 2024 estimate, and the results suggest a median of 312,000 H100e smuggled into China in 2025, with a 90% confidence interval of 176,000 to 565,000. The results are most definitely not to be taken as gospel, though they attempt to account for the impact of the H20 ban, the emergence of Blackwell, and reported instances of smuggling such as the Financial Times’s report. As a testament to the high variability of this estimate, the recent news of a plot by Supermicro executives to sell $2.6 billion worth of Nvidia chips to China drastically changed the calculation. Before this news, the simulation estimated a median of 240,000 H100e; afterward, I raised the lower bound on smuggling, driving the median up to 312,000. Every new data point can wildly alter the estimate.

Monte Carlo simulations assign probability distributions to different inputs based on evidence and reports. The simulation then rolls the dice 200,000 times, each time randomly drawing a value for every input from its distribution, and tallies the results. The result, charted in this section, is a spread of possible outcomes from which a somewhat educated range can be drawn. It is highly variable and requires many assumptions, but it is the best we have.
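To make these mechanics concrete, here is a minimal Python sketch of a smuggling simulation. It is not the code in the linked repo: the lognormal distribution for units smuggled, the uniform Blackwell share, and all of their parameters are hypothetical placeholders chosen for illustration. The only figure taken from this article is the B200’s rating of roughly 2.5 H100e, and the default of 200,000 draws mirrors the text.

```python
from __future__ import annotations

import math
import random
import statistics

Z95 = 1.6449  # standard-normal z-score at the 95th percentile


def lognormal_from_90ci(lo: float, hi: float) -> tuple[float, float]:
    """(mu, sigma) of a lognormal whose 5th and 95th percentiles are lo and hi."""
    mu = (math.log(lo) + math.log(hi)) / 2
    sigma = (math.log(hi) - math.log(lo)) / (2 * Z95)
    return mu, sigma


def simulate_smuggled_h100e(n_draws: int = 200_000, seed: int = 0) -> list[float]:
    """Draw n_draws yearly totals of smuggled compute, in H100e."""
    rng = random.Random(seed)
    # HYPOTHETICAL input: chips smuggled in one year, 90% range 50k to 400k.
    mu, sigma = lognormal_from_90ci(50_000, 400_000)
    b200_h100e = 2.5  # from the text: a B200 is ~2.5 H100e (Epoch AI)
    totals = []
    for _ in range(n_draws):
        units = rng.lognormvariate(mu, sigma)
        # HYPOTHETICAL input: fraction of smuggled chips that are B200s.
        blackwell_share = rng.uniform(0.2, 0.6)
        # Remaining chips are Hopper (H100/H200), counted as 1 H100e each.
        per_chip = blackwell_share * b200_h100e + (1 - blackwell_share) * 1.0
        totals.append(units * per_chip)
    return totals


if __name__ == "__main__":
    draws = simulate_smuggled_h100e()
    cuts = statistics.quantiles(draws, n=20)  # cut points at 5%, 10%, ..., 95%
    print(f"median: {statistics.median(draws):,.0f} H100e")
    print(f"90% interval: {cuts[0]:,.0f} to {cuts[-1]:,.0f} H100e")
```

Fitting a lognormal to a 90% range is a common choice for strictly positive, right-skewed quantities like smuggled volumes: every draw stays positive, and the long upper tail reflects the possibility of large undiscovered operations.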

In total, compute smuggled into China likely amounts to roughly 452,000 H100e, most of it smuggled in the past two years, with a 90% confidence interval of 193,500 to 1,345,000 H100e. It is unclear how much of this compute is usable in large-scale clusters, as Nvidia would not service such clusters if the chips ran into issues. However, the jury is still out on how easily non-Nvidia engineers can fix potential issues with Nvidia hardware.
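The combined smuggling figure can be reproduced by adding the two yearly estimates quoted in this section. In this Python sketch, the 2024 range is CNAS’s (in chips, each counted as one H100e) and the 2025 range is the author’s simulation result. Adding the 5th and 95th percentiles separately, as done here, matches the quoted interval, but it is a conservative way to combine ranges: when the yearly errors are independent, the 90% interval of the sum is typically narrower.

```python
# Combine the two yearly smuggling estimates quoted in this section (H100e).
YEARLY = {
    2024: {"p5": 17_500, "median": 140_000, "p95": 780_000},   # CNAS
    2025: {"p5": 176_000, "median": 312_000, "p95": 565_000},  # author's simulation
}

median_total = sum(y["median"] for y in YEARLY.values())
# Endpoint addition reproduces the quoted range but is conservative (wide)
# when the two years' errors are independent.
low_total = sum(y["p5"] for y in YEARLY.values())
high_total = sum(y["p95"] for y in YEARLY.values())

print(f"median ~ {median_total:,} H100e")           # 452,000
print(f"range  ~ {low_total:,} to {high_total:,}")  # 193,500 to 1,345,000
```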

This estimate also leads to a strange conclusion: China was likely able to illegally import about as much compute as it was able to legally import. This is partially explained by the fact that China’s window for legally purchasing chips was narrower than its window for smuggling in higher-performance ones. Further, demand for AI chips was at its lowest in 2020, when the A100 first came onto the market, so the low number of chips legally acquired during that period is understandable.

Homegrown Compute

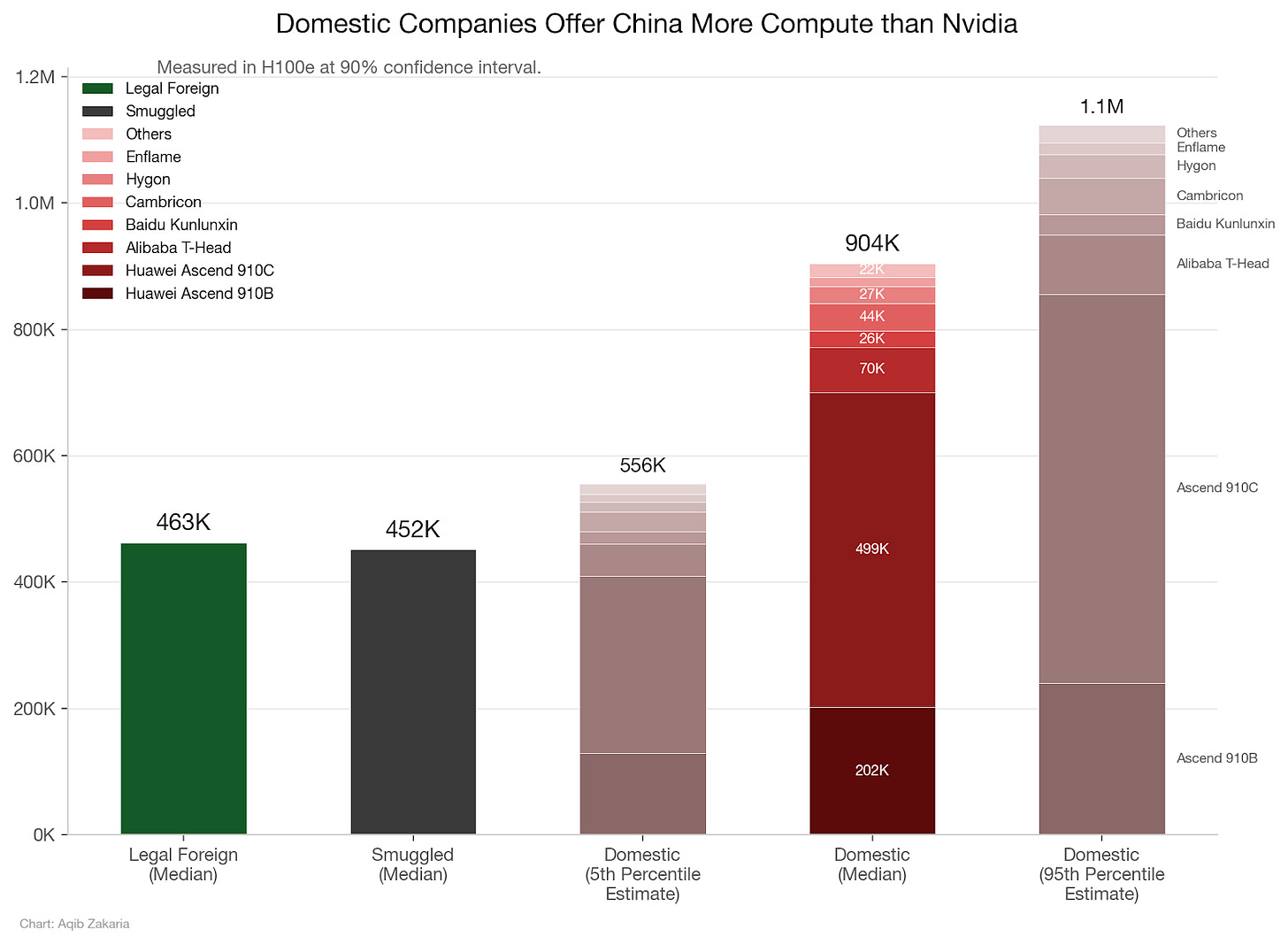

For homegrown compute, China’s champion is Huawei, with its Ascend 910B and Ascend 910C products. According to Epoch AI data, both have been in production since Q1 2024, and China has acquired roughly 600,000 Ascend 910Bs (201,798 H100e) and 650,000 Ascend 910Cs (498,971 H100e).

China also has a competitive AI accelerator industry that accounts for a decent chunk of compute. Providers include Alibaba’s T-Head (平头哥), Baidu’s Kunlunxin (昆仑芯), Cambricon (寒武纪), Hygon (海光信息), Enflame (燧原科技), Moore Threads (摩尔线程), Iluvatar (天数智芯), Biren (壁仞科技), and MetaX (沐曦). Although none individually comes close to Huawei’s scale, some have shipped hundreds of thousands of units, and in aggregate they contribute meaningfully to China’s compute supply.

After Huawei, Alibaba’s T-Head is likely the biggest supplier of domestic Chinese compute, with an estimated 470,000 chips (70,500 H100e) sold to date. Next is likely Baidu’s Kunlunxin with an estimated 200,000 chips (25,800 H100e). Then it’s Cambricon with 170,000 chips (~44,030 H100e), followed by Hygon with 160,000 chips (~26,560 H100e).

Other companies have much smaller shipment volumes. As of 30 June 2025, Iluvatar had shipped roughly 53,000 units (~9,600 H100e) of its AI chips. Over roughly the same timeframe, Moore Threads shipped 25,000 chips (~3,750 H100e), and Enflame shipped 80,000 chips (~15,000 H100e). Lastly, MetaX and Biren account for 25,000 chips (~6,075 H100e) and 12,000 chips (~2,304 H100e), respectively. Other companies, like Tsingmicro and Sunrise, are also known to have shipped at least 10,000 units, but specifications for their chips and their actual order numbers are impossible to find.

Omitting the smallest companies with no public specs (Tsingmicro, Sunrise, and others in their league), the total compute from domestic Chinese companies comes to about 904,000 H100e, with a 90% confidence interval of 560,000 to 1,100,000 H100e. The wide range is due to uncertainty in the H100e conversion factors for some chips, especially Huawei’s, and some uncertainty in exact unit counts. The full error bar analysis is included here. This estimate is likely more accurate than those for smuggled chips or remotely accessed compute, as its inputs can be derived directly from company reports. Some of the companies listed also intend to go public in the near future, and their IPO prospectuses could more clearly reveal their revenue streams and shipment volumes. These documents would help us better gauge how much compute Chinese companies are actually able to make.
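Summing the median H100e figures quoted above reproduces the domestic total and shows how dominant Huawei is. This Python sketch uses only numbers from this section and omits vendors without public specs, as the text does.

```python
# Sum of the domestic median H100e figures quoted above.
DOMESTIC_H100E = {
    "Huawei Ascend 910B": 201_798,
    "Huawei Ascend 910C": 498_971,
    "Alibaba T-Head": 70_500,
    "Baidu Kunlunxin": 25_800,
    "Cambricon": 44_030,
    "Hygon": 26_560,
    "Iluvatar": 9_600,
    "Moore Threads": 3_750,
    "Enflame": 15_000,
    "MetaX": 6_075,
    "Biren": 2_304,
}

total = sum(DOMESTIC_H100E.values())
huawei = sum(v for k, v in DOMESTIC_H100E.items() if k.startswith("Huawei"))
print(f"domestic total: {total:,} H100e")  # 904,388
print(f"Huawei share:   {huawei / total:.0%}")  # 77%
```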

However, there is significantly more uncertainty about whether Chinese AI chips can actually deliver on their promised specs. Until we get Huawei hardware on ClusterMAX, it remains an open question just how good Huawei’s chips and accompanying software really are.

Remote Access Compute

This estimate is the mother of all uncertainties. Estimating how much compute China can remotely access via the cloud is a gargantuan task, as controls against remote access are weak-to-nonexistent and actors can easily skirt them.

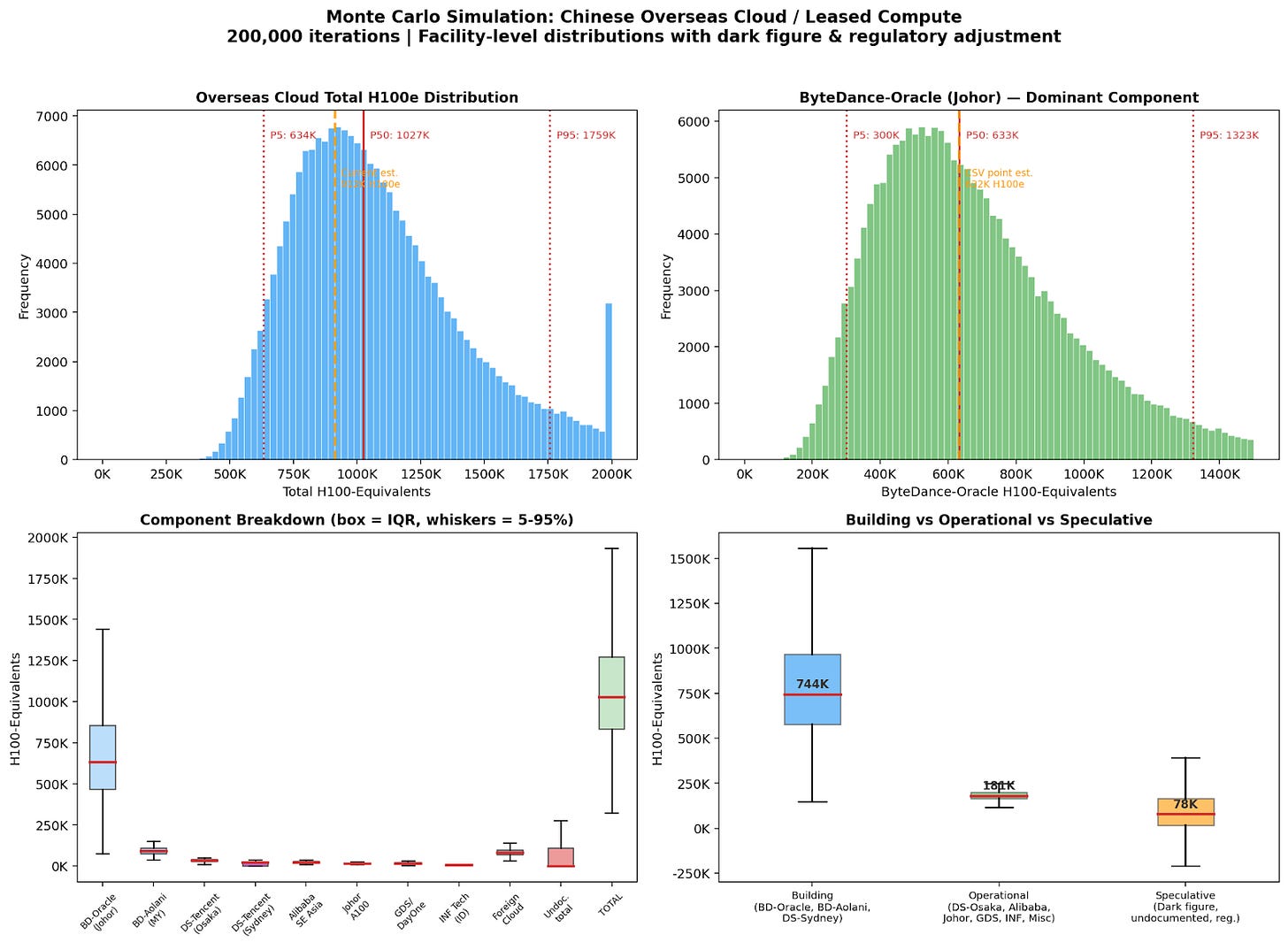

I calculated a total for remote access with another Monte Carlo simulation, which produced a median estimate of roughly 1,026,000 H100e, with high variability. The range for this estimate is between 600,000 and 1,800,000 H100e.

In order to do this calculation, I factored in the range of possible compute at dedicated clusters built by Chinese entities abroad. The biggest variable is the ByteDance-Oracle Johor cluster, and I also included ranges for projects by Tencent and Alibaba throughout Southeast Asia. Some facilities, like INF Tech, have confirmed counts of GPUs, so their range is tightened. I also accounted for similar ranges for American, European, and other Asian data centers, and those ranges were predicated on tender documents and other reports. I also included a range for a multiplier to account for undiscovered compute access. For transparency, in addition to the .py file linked above, the calculation details are included in a .csv file with explanation in an .md file, located here. Further research will almost definitely narrow the range of compute for different projects and data centers, thus tightening the range for the total estimate.

Source: ClaudeCode
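The structure described above, with independent ranges for each site or site group summed and then scaled by an uncertain multiplier for undiscovered access, can be sketched in Python as follows. This is not the linked .py file: the site labels, their 90% ranges, and the multiplier bounds are hypothetical placeholders rather than the author’s inputs, and modeling each range as a lognormal fitted to its 5th and 95th percentiles is our own assumption.

```python
from __future__ import annotations

import math
import random
import statistics

Z95 = 1.6449  # z-score of the 95th percentile of a standard normal

# HYPOTHETICAL 90% ranges (H100e) per site group; placeholders only.
SITE_RANGES = {
    "large_dedicated_cluster": (100_000, 600_000),
    "other_southeast_asia": (50_000, 300_000),
    "confirmed_count_site": (9_000, 11_000),  # tight range: GPU count is known
    "us_eu_other_asia": (50_000, 400_000),
}
MULTIPLIER_RANGE = (1.0, 1.5)  # HYPOTHETICAL factor for undiscovered access


def draw_lognormal(rng: random.Random, lo: float, hi: float) -> float:
    """Sample a lognormal whose 5th and 95th percentiles are lo and hi."""
    mu = (math.log(lo) + math.log(hi)) / 2
    sigma = (math.log(hi) - math.log(lo)) / (2 * Z95)
    return rng.lognormvariate(mu, sigma)


def simulate_remote_h100e(n_draws: int = 100_000, seed: int = 0) -> list[float]:
    """Each draw: sum one sample per site, then apply the multiplier."""
    rng = random.Random(seed)
    totals = []
    for _ in range(n_draws):
        known = sum(draw_lognormal(rng, lo, hi) for lo, hi in SITE_RANGES.values())
        totals.append(known * rng.uniform(*MULTIPLIER_RANGE))
    return totals


if __name__ == "__main__":
    draws = simulate_remote_h100e()
    cuts = statistics.quantiles(draws, n=20)  # cut points at 5%, ..., 95%
    print(f"median {statistics.median(draws):,.0f}; "
          f"90% interval {cuts[0]:,.0f} to {cuts[-1]:,.0f} H100e")
```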

More research hours should be dedicated to following the paper trails of companies collaborating on data center buildouts, particularly in Southeast Asia. This is also where mandated reporting of Chinese customers, via a KYC scheme or legislation like the Remote Access Security Act, could have the most impact. From my own conversations, the extent of Chinese access to neoclouds is the least understood, and it is also an area where further research could yield much-needed information. I have heard many stories of Chinese customers doggedly requesting access to neocloud compute: how many neoclouds are accepting, and how much compute does that amount to? The answer is important and could shift the above estimate greatly.

The size of the median estimate may be shocking to some, as it surpasses the compute from every other source. The projects in Southeast Asia are the largest contributor, especially as they contain leading-edge chips far more powerful than legally purchased Nvidia chips or indigenous Chinese chips. (See the recent reporting from The Wall Street Journal on ByteDance’s collaboration with Nvidia via Aolani Cloud.) These chips may then be remotely accessed by Chinese labs, effectively rendering export controls on such chips impotent. However, the plain number does not properly explicate the compute’s usefulness; due to latency requirements, Chinese actors likely cannot utilize this compute pool for large-scale training purposes.

Final Calculation

Adding it all together, this calculation reached a median estimate of 2.8 million H100e. For context, Epoch AI estimates the cumulative compute by leading chip designers to total 20 million H100e. This suggests that China has access to more than an eighth of the world’s compute; the U.S. would presumably have some level of access to the rest (subtracting the marginal compute used exclusively by non-American labs). Some of China’s compute — the portion able to be remotely accessed — would presumably be accessible to American customers as well.
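The headline numbers can be tallied from the four category estimates quoted in this piece. This Python sketch uses the unrounded sums of the quoted legal and domestic figures (462,724 and 904,388 H100e) and assumes the overall range was formed by adding each category’s lower and upper bounds; under that assumption it lands close to the quoted 1.8 to 4.8 million range. The remote-access range is treated here as a 90% interval, which the text does not state explicitly.

```python
# Final tally from the category estimates quoted in this piece (H100e).
CATEGORIES = {
    # name: (lower bound, median, upper bound)
    "legal foreign": (395_000, 462_724, 570_000),
    "smuggled": (193_500, 452_000, 1_345_000),
    "domestic": (560_000, 904_388, 1_100_000),
    "remote access": (600_000, 1_026_000, 1_800_000),
}
WORLD_H100E = 20_000_000  # Epoch AI cumulative estimate cited in the text

median = sum(m for _, m, _ in CATEGORIES.values())
low = sum(lo for lo, _, _ in CATEGORIES.values())
high = sum(hi for _, _, hi in CATEGORIES.values())

print(f"median: {median:,} H100e")                    # 2,845,112
print(f"range:  {low:,} to {high:,} H100e")           # 1,748,500 to 4,815,000
print(f"share of world: {median / WORLD_H100E:.1%}")  # 14.2%
```

The printed share, about 14%, is a little more than one-eighth (12.5%) of Epoch AI’s 20 million H100e total.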

This calculation simply tallies the chips sold to China in recent years and the chips China can remotely access. However, the true number may differ dramatically in either direction due to uncertainty in the smuggling and remote access numbers. This estimate also does not account for chips that have become inoperable after burning out from use. We politely asked all four major American cloud providers; unsurprisingly, none would give us any color on what percentage of their compute serves China-headquartered firms.

Getting a better understanding of these numbers would require an act of Congress, or the Commerce Department implementing the Know-Your-Customer regulation floated by the Biden administration in January 2024, as well as some mechanism to compel Nvidia to tell the government who is using non-U.S.-headquartered neocloud compute in Southeast Asia. Creative policymaking could also give the government new ways to access CSP customer data without violating privacy rights or exposing CSPs to excessive liability. Devising methods that minimize government invasion of privacy while still securing the information relevant to national security should be a key focus for lawyers and policymakers.

If you liked my attempt at a bottom-up calculation, be sure to tune in tomorrow for Nick Corvino’s article estimating from the other direction.
